Hi Metabase Team,

I hope you are doing well.

While using Metabase serialization to export and import collections between databases, I ran into a few issues that I would greatly appreciate your help with.

Note: I am a paid user of Metabase.

I have implemented a custom job for this process that takes the following inputs:

- Old database ID
- New database ID
- Collection ID (to be migrated)
- Metabase API key

For exporting, I am using:

POST https://callhub.metabaseapp.com/api/ee/serialization/export?collection={collection_id}

For importing, I am using:

POST https://callhub.metabaseapp.com/api/ee/serialization/import

with the x-api-key header, sending the exported .tgz file as multipart form data.
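For reference, here is a simplified sketch of how the two requests can be built with Python's standard library. It is not our exact job: `extra_params` is a hypothetical hook for additional export options (for example `data_model=false`, assuming the export endpoint accepts such a parameter, which I would like to confirm with you), and the multipart field name `file` is an assumption. The requests are only constructed here; sending one would be `urllib.request.urlopen(req)`.

```python
import urllib.parse
import urllib.request
import uuid
from typing import Optional

BASE_URL = "https://callhub.metabaseapp.com"


def build_export_request(api_key: str, collection_id: int,
                         extra_params: Optional[dict] = None) -> urllib.request.Request:
    """Build (without sending) the POST request that exports one collection."""
    params = {"collection": collection_id}
    # Hypothetical hook for further export options; names must be checked against the Metabase docs.
    if extra_params:
        params.update(extra_params)
    url = f"{BASE_URL}/api/ee/serialization/export?{urllib.parse.urlencode(params)}"
    return urllib.request.Request(url, method="POST", headers={"x-api-key": api_key})


def build_import_request(api_key: str, tgz_bytes: bytes,
                         filename: str = "export.tgz") -> urllib.request.Request:
    """Build (without sending) the POST request that uploads an export archive."""
    boundary = uuid.uuid4().hex
    # Assumption: the archive goes in a multipart form field named "file".
    head = (
        f"--{boundary}\r\n"
        f'Content-Disposition: form-data; name="file"; filename="{filename}"\r\n'
        "Content-Type: application/gzip\r\n\r\n"
    ).encode()
    tail = f"\r\n--{boundary}--\r\n".encode()
    return urllib.request.Request(
        f"{BASE_URL}/api/ee/serialization/import",
        data=head + tgz_bytes + tail,
        method="POST",
        headers={
            "x-api-key": api_key,
            "Content-Type": f"multipart/form-data; boundary={boundary}",
        },
    )
```

Building the multipart body by hand keeps the sketch dependency-free; in practice a library such as requests would handle the encoding.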

Issues I’m facing:

-

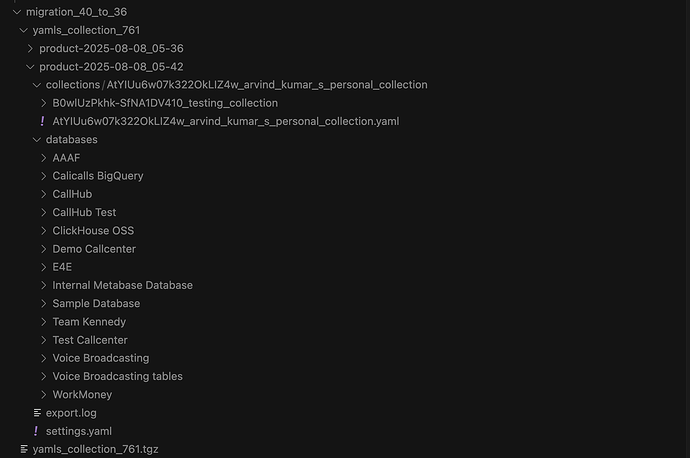

Instead of exporting only the specified collection (with its referenced items and related databases), the API is exporting all available databases. In the attached screenshot, you can see that the export contains multiple databases, even though I only specified a single collection.

- The export process creates dynamically named directories (e.g., product-2025-08-08_05-36 in the screenshot). Because of this, it is difficult to provide the correct folder path during import.
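As a stopgap, we are considering reading the directory name from the archive itself rather than predicting it. A minimal sketch, assuming each export .tgz contains exactly one top-level directory such as product-2025-08-08_05-36 (the helper name `export_root_dir` is ours, not part of Metabase):

```python
import io
import tarfile
from pathlib import PurePosixPath


def export_root_dir(tgz_bytes: bytes) -> str:
    """Return the single top-level directory name inside an export archive."""
    with tarfile.open(fileobj=io.BytesIO(tgz_bytes), mode="r:gz") as tar:
        roots = {
            PurePosixPath(member.name).parts[0]
            for member in tar.getmembers()
            if PurePosixPath(member.name).parts  # skip bare "." entries
        }
    # Assumption: one export produces one root folder; fail loudly otherwise.
    if len(roots) != 1:
        raise ValueError(f"expected one top-level directory, found {sorted(roots)}")
    return roots.pop()
```

This only works around the naming problem; it does not tell us how the name is generated, which is why I am asking below.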

Request:

- Could you explain why all databases are being exported instead of only the specified collection with its references?
- How exactly are these dynamic directories generated, and is there a way to control or predict their names?
- Could you provide clear guidance or best practices for migrating a single collection from one database to another without exporting everything?

I have attached the screenshot, logs, API responses, and export folder structures for your review.

Your guidance on this would be extremely helpful, as this is currently blocking our migration process.

Best regards,

Arvind Kumar

Software Engineer

CallHub (Gaglers Software Pvt. Ltd.)