I have a connection to BigQuery. Since upgrading to Metabase v0.36.6, I haven't been able to connect to one of our databases. Every time I re-authenticate this database's BigQuery connection, it works for a few seconds and then I get the error below.

Any help would be appreciated.

Log snippet:

context :ad-hoc,

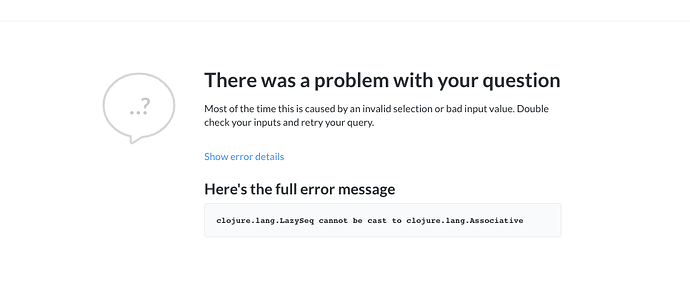

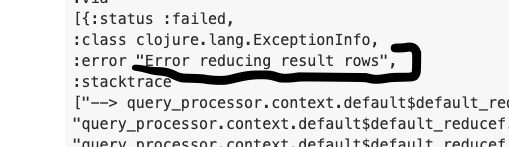

:error "clojure.lang.LazySeq cannot be cast to clojure.lang.Associative",

:row_count 0,

:running_time 0,

:preprocessed

{:database 66,

:query

{:source-table 1729,

:fields

Diagnostic Info:

```json
{
  "browser-info": {
    "language": "en-GB",
    "platform": "MacIntel",
    "userAgent": "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_14_6) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/84.0.4147.89 Safari/537.36 Edg/84.0.522.40",
    "vendor": "Google Inc."
  },
  "system-info": {
    "file.encoding": "UTF-8",
    "java.runtime.name": "OpenJDK Runtime Environment",
    "java.runtime.version": "1.8.0_262-heroku-b10",
    "java.vendor": "Oracle Corporation",
    "java.vendor.url": "http://java.oracle.com/",
    "java.version": "1.8.0_262-heroku",
    "java.vm.name": "OpenJDK 64-Bit Server VM",
    "java.vm.version": "25.262-b10",
    "os.name": "Linux",
    "os.version": "4.4.0-1078-aws",
    "user.language": "en",
    "user.timezone": "Etc/UTC"
  },
  "metabase-info": {
    "databases": [
      "mysql",
      "postgres",
      "bigquery",
      "googleanalytics"
    ],
    "hosting-env": "heroku",
    "application-database": "postgres",
    "application-database-details": {
      "database": {
        "name": "PostgreSQL",
        "version": "10.13 (Ubuntu 10.13-1.pgdg16.04+1)"
      },
      "jdbc-driver": {
        "name": "PostgreSQL JDBC Driver",
        "version": "42.2.8"
      }
    },
    "run-mode": "prod",
    "version": {
      "tag": "v0.36.6",
      "date": "2020-09-15",
      "branch": "release-0.36.x",
      "hash": "cb258fb"
    },
    "settings": {
      "report-timezone": "Europe/Berlin"
    }
  }
}
```
