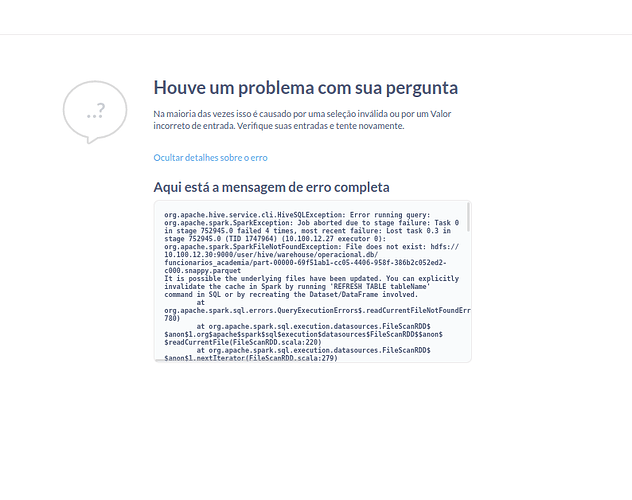

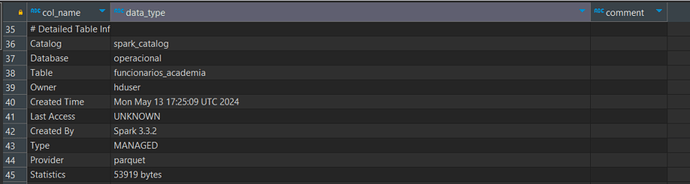

I'm getting the following error on the SparkSQL connection in Metabase. We've created routines that update the Parquet file in HDFS through Spark. When the file is updated, the Parquet hash changes, so the file the table previously referenced no longer exists. The table only comes back when we clear the cache of the Hive database. Is there any configuration in Metabase that can fix this type of error? Or is there a solution in how we write the file or create the table in Hive? I tried creating a managed table, but it didn't solve the issue.

Hey Tiburcio,

By clearing the cache, do you mean running a refresh call on the table metadata after updating the Parquet file?

If so, did you try the managed table route to avoid the performance cost of calling a refresh every time the structure changes?

Either way, that error does look like a mismatch between the files on the file system and Spark's cached metadata.
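If the refresh does turn out to be the fix, one option is to make it the last step of the update routine so nobody has to clear the cache by hand. Below is a minimal sketch, assuming the routine runs in PySpark with an active `SparkSession`. The helper names and the `sales.events` table are hypothetical, made up for illustration. `REFRESH TABLE` is standard Spark SQL, but it only invalidates the cache of the Spark application that runs it, so this assumes the statement reaches the same Spark application Metabase queries through.

```python
import re
from typing import Optional

# Plain identifiers only, so the generated SQL cannot be hijacked by odd input.
_IDENTIFIER = re.compile(r"^[A-Za-z_][A-Za-z0-9_]*$")


def refresh_statement(table: str, database: Optional[str] = None) -> str:
    """Build the Spark SQL statement that invalidates cached metadata for a table."""
    parts = [database, table] if database else [table]
    for part in parts:
        if not _IDENTIFIER.match(part):
            raise ValueError(f"unexpected identifier: {part!r}")
    return "REFRESH TABLE " + ".".join(parts)


def refresh_after_write(spark, table: str, database: Optional[str] = None) -> None:
    """Run the refresh on the given session right after the Parquet files are rewritten.

    `spark` is expected to be a pyspark.sql.SparkSession (anything with a
    .sql(str) method works, which keeps this sketch testable without a cluster).
    """
    spark.sql(refresh_statement(table, database))


# Hypothetical usage at the end of the update routine:
#   df.write.mode("overwrite").parquet(path)   # existing write step
#   refresh_after_write(spark, "events", "sales")
```

The same thing can be done with `spark.catalog.refreshTable("sales.events")`; the SQL form is used here because it can also be sent over a JDBC/Thrift connection.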