Chaining queries in Metabase seems to move operations closer to the storage layer, which appears to significantly improve performance while reducing disk I/O and network transfer of data.

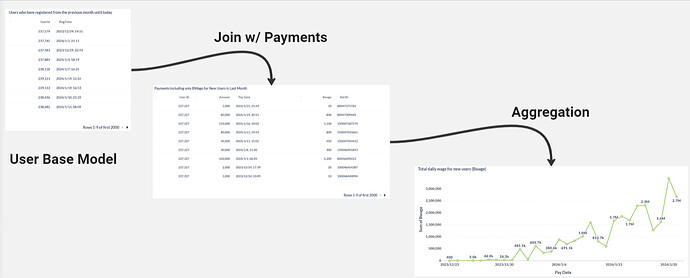

I’ve been using Metabase for some time and I’ve noticed an interesting behavior. When I chain questions together inside Metabase, query performance seems to improve significantly. When I run the same logic as a single large, optimized native query, the execution time is considerably longer.

This led me to wonder if Metabase has something akin to a Query Planner or an Optimization Engine, similar to what we see in tools like Presto. If so, could you please explain how it works? And why does it seem to perform better with chained questions as opposed to a single large optimized query?

Additionally, I came across this in the Presto documentation:

“Presto defines several rules, including well-known optimizations such as predicate and limit pushdown, column pruning, and decorrelation. In practice, Presto can make intelligent choices on how much of the query processing can be pushed down into the data sources, depending on the source’s abilities. For example, some data sources may be able to evaluate predicates, aggregations, function evaluation, etc. By pushing these operations closer to the data, Presto achieves significantly improved performance by minimizing disk I/O and network transfer of data.”

Does Metabase employ similar strategies?
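
To make concrete what I mean by pushdown, here is a minimal sketch that uses Python's built-in `sqlite3` module as a stand-in data source. The `orders` table and the helper names are hypothetical, and this only illustrates the general idea from the Presto quote. It is not a description of how Metabase or Presto work internally.

```python
import sqlite3


def make_db(n_rows=10_000):
    """Build an in-memory table of hypothetical orders to query against."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE orders (id INTEGER PRIMARY KEY, region TEXT, amount REAL)"
    )
    rows = [
        (i, "EU" if i % 4 == 0 else "US", float(i % 100)) for i in range(n_rows)
    ]
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    return conn


def total_without_pushdown(conn, region):
    """Fetch every row and column, then filter and aggregate on the client."""
    rows = conn.execute("SELECT * FROM orders").fetchall()
    total = sum(amount for _id, r, amount in rows if r == region)
    return total, len(rows)  # len(rows) = rows transferred from the source


def total_with_pushdown(conn, region):
    """Let the data source evaluate the predicate and the aggregation."""
    rows = conn.execute(
        "SELECT SUM(amount) FROM orders WHERE region = ?", (region,)
    ).fetchall()
    return rows[0][0], len(rows)  # only a single row leaves the source


if __name__ == "__main__":
    conn = make_db()
    print(total_without_pushdown(conn, "EU"))
    print(total_with_pushdown(conn, "EU"))
```

Both functions return the same total, but the second transfers one row instead of the whole table. That is the kind of difference I suspect might be behind what I'm seeing.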

Any insights would be greatly appreciated.